Many Hardware Security Modules (HSMs) use smart cards to store cryptographic material, to export the Storage Master Key (SMK) and application keys, and to authenticate Security Officers and Crypto Officers.

These smart cards are often stored in Tamper Evident Bags (TEBs) to provide a chain of custody and to prove that no one has read or otherwise tampered with the card. Unfortunately this is not secure; it’s trivial to read the card through the TEB in a way that is almost undetectable.

If you need to mail a smart card, or store it in a safe, it should be placed in a hard-shell case, which should be sealed with tamper evident seals and then placed in a TEB. My standard suggestion for this was to use clear PCMCIA card holders and foil tamper evident seals, but clear PCMCIA card holders are increasingly hard to find. The Pelican 0915 SD Memory Card Case and the Pelican 1040 Micro Case both look like they might work, although the SD card case unfortunately doesn’t have a clear front.

Reading a card through a TEB is surprisingly easy. I first did this in 2010, but since I deleted that writeup, I am redoing it here.

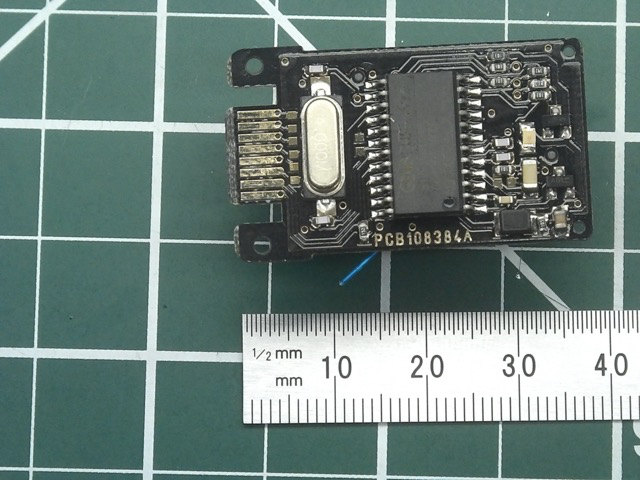

An HSM smart card is just a “standard” smart card and the contact layout is almost exactly the same as an 8-pin DIP (dual in-line package). I took a cheap USB smart card reader ($20 on Amazon), and removed it from its packaging.
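To illustrate why a DIP socket lines up with the card, here is a minimal sketch that compares the contact pad spacing with the 2.54 mm pin pitch and 7.62 mm row spacing of a DIP-8. The pad coordinates and signal names are my recollection of the ISO/IEC 7816-2 minimum contact areas and should be treated as assumptions to check against the standard; the names `CONTACTS`, `center`, and `pitches` are made up for this example.

```python
# Minimum contact areas in mm, measured from the card's left and top edges,
# as (left, right, top, bottom). ASSUMPTION: values quoted from memory of
# ISO/IEC 7816-2; verify against the standard before relying on them.
CONTACTS = {
    "C1 VCC": (10.25, 12.25, 19.23, 20.93),
    "C2 RST": (10.25, 12.25, 21.77, 23.47),
    "C3 CLK": (10.25, 12.25, 24.31, 26.01),
    "C4 AUX1": (10.25, 12.25, 26.85, 28.55),
    "C5 GND": (17.87, 19.87, 19.23, 20.93),
    "C6 VPP": (17.87, 19.87, 21.77, 23.47),
    "C7 I/O": (17.87, 19.87, 24.31, 26.01),
    "C8 AUX2": (17.87, 19.87, 26.85, 28.55),
}

DIP_PIN_PITCH_MM = 2.54    # 0.1 inch between adjacent pins in a row
DIP_ROW_SPACING_MM = 7.62  # 0.3 inch between the two rows of a DIP-8


def center(area):
    """Return the (x, y) center of one contact area, rounded to 0.01 mm."""
    left, right, top, bottom = area
    return (round((left + right) / 2, 2), round((top + bottom) / 2, 2))


def pitches(contacts):
    """Return (column gap, set of row gaps) between contact centers in mm."""
    centers = [center(area) for area in contacts.values()]
    xs = sorted({x for x, _ in centers})
    ys = sorted({y for _, y in centers})
    column_gap = round(xs[1] - xs[0], 2)
    row_gaps = {round(b - a, 2) for a, b in zip(ys, ys[1:])}
    return column_gap, row_gaps


if __name__ == "__main__":
    column_gap, row_gaps = pitches(CONTACTS)
    print("column gap:", column_gap, "mm; DIP row spacing:", DIP_ROW_SPACING_MM)
    print("row gaps:", sorted(row_gaps), "mm; DIP pin pitch:", DIP_PIN_PITCH_MM)
```

If those figures are right, the pad centers sit on the same grid as the legs of a DIP-8 socket. The physical pads are usually larger than the minimum areas, which leaves some tolerance when positioning the socket by hand.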

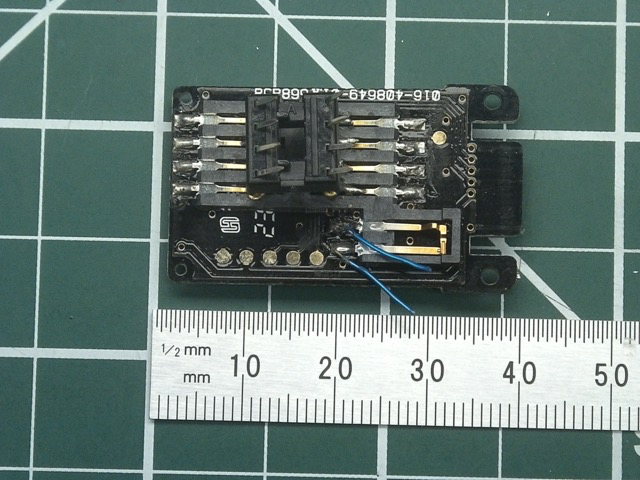

I soldered a cheap 8-pin DIP socket onto the reader slide contacts (the “cheap” through-hole DIP sockets work better than the better quality milled ones, as the tips are sharper). Smart card readers have a switch which is activated when the card is inserted (bottom right of the second picture above) – I bridged across this with a small pushbutton, to allow me to activate it on demand.

The “points” of the DIP socket can now be placed outside the bag, just above the contacts. Pressing down and “wiggling” the reader will make the points pierce the bag, and make contact with the smart card, allowing the card to be read through the bag – these holes are tiny, and difficult to see, especially if done carefully and above the bag label.

If you know where to look, this attack is detectable – a better-resourced attacker could easily replace the (large) 8-pin DIP with something like 7X Tungsten Cat Whisker Fine Probes. I only have much larger die probes (and only a small number of these), but even with these the plastic seems to self-heal after poking them through.

In summary, don’t just trust a tamper evident bag – they are primarily designed for protecting deposits, or chain-of-custody of evidence, not protecting something like a smart card. Instead, seal the smart card in a hard-shell case, place numbered and signed tamper evident seals on all sides of the case, and then place this entire set in a (numbered and signed) tamper evident bag.

I’ve put a video demonstrating reading the card here: https://www.youtube.com/watch?v=oMDpXdDU1G4&feature=youtu.be

Output from the reader (the format is that of `pcsc_scan`):

Tue Mar 24 09:57:49 2020

Reader 0: Gemalto PC Twin Reader 00 00

Event number: 49

Card state: Card inserted,

ATR: 3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

ATR: 3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

+ TS = 3B --> Direct Convention

+ T0 = 2A, Y(1): 0010, K: 10 (historical bytes)

TB(1) = 00 --> VPP is not electrically connected

+ Historical bytes: 80 65 A2 01 02 01 31 72 D6 43

Category indicator byte: 80 (compact TLV data object)

Tag: 6, len: 5 (pre-issuing data)

Data: A2 01 02 01 31

Tag: 7, len: 2 (card capabilities)

Selection methods: D6

- DF selection by full DF name

- DF selection by partial DF name

- DF selection by file identifier

- Short EF identifier supported

- Record number supported

Data coding byte: 43

- Behaviour of write functions: write OR

- Value 'FF' for the first byte of BER-TLV tag fields: invalid

- Data unit in quartets: 8

Possibly identified card (using /usr/share/pcsc/smartcard_list.txt):

3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

3B 2A 00 80 65 A2 01 .. .. .. 72 D6 43

Gemplus MPCOS EMV 4 Byte sectors

3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

MPCOS-EMV 64K Functional Sample

THALES nShield Security World

THALES NCIPHER product line

Tue Mar 24 09:57:50 2020

Reader 0: Gemalto PC Twin Reader 00 00

Event number: 50

Card state: Card removed,

Tue Mar 24 09:57:51 2020

Reader 0: Gemalto PC Twin Reader 00 00

Event number: 51

Card state: Card inserted,

ATR: 3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

ATR: 3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

+ TS = 3B --> Direct Convention

+ T0 = 2A, Y(1): 0010, K: 10 (historical bytes)

TB(1) = 00 --> VPP is not electrically connected

+ Historical bytes: 80 65 A2 01 02 01 31 72 D6 43

Category indicator byte: 80 (compact TLV data object)

Tag: 6, len: 5 (pre-issuing data)

Data: A2 01 02 01 31

Tag: 7, len: 2 (card capabilities)

Selection methods: D6

- DF selection by full DF name

- DF selection by partial DF name

- DF selection by file identifier

- Short EF identifier supported

- Record number supported

Data coding byte: 43

- Behaviour of write functions: write OR

- Value 'FF' for the first byte of BER-TLV tag fields: invalid

- Data unit in quartets: 8

Possibly identified card (using /usr/share/pcsc/smartcard_list.txt):

3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

3B 2A 00 80 65 A2 01 .. .. .. 72 D6 43

Gemplus MPCOS EMV 4 Byte sectors

3B 2A 00 80 65 A2 01 02 01 31 72 D6 43

MPCOS-EMV 64K Functional Sample

THALES nShield Security World

THALES NCIPHER product line

Tue Mar 24 09:57:51 2020

Reader 0: Gemalto PC Twin Reader 00 00

Event number: 52

Card state: Card removed
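The ATR breakdown in the output can be reproduced by hand. The following is a minimal sketch, assuming the ISO/IEC 7816-3 layout of TS, T0, and interface bytes, and the ISO/IEC 7816-4 compact-TLV encoding of the historical bytes; it ignores the optional TCK checksum. The function names `parse_atr` and `parse_compact_tlv` are made up for this example and do not belong to any library.

```python
def parse_atr(atr_hex):
    """Split an ATR into convention, interface bytes and historical bytes."""
    data = bytes.fromhex(atr_hex)
    ts, t0 = data[0], data[1]
    convention = {0x3B: "direct", 0x3F: "inverse"}.get(ts, "unknown")
    hist_len = t0 & 0x0F   # K: number of historical bytes
    y = t0 >> 4            # Y(1): bitmap of which of TA1..TD1 are present
    pos = 2
    index = 1
    interface = {}
    while True:
        td = None
        for bit, name in ((1, "TA"), (2, "TB"), (4, "TC"), (8, "TD")):
            if y & bit:
                interface[f"{name}{index}"] = data[pos]
                if name == "TD":
                    td = data[pos]
                pos += 1
        if td is None:     # no TD byte means no further interface bytes
            break
        y = td >> 4
        index += 1
    return {
        "convention": convention,
        "interface": interface,
        "historical": data[pos:pos + hist_len],
    }


def parse_compact_tlv(historical):
    """Decode compact-TLV historical bytes into (tag, value) pairs."""
    if not historical or historical[0] != 0x80:
        return []          # only category indicator 0x80 is handled here
    objects = []
    pos = 1
    while pos < len(historical):
        tag, length = historical[pos] >> 4, historical[pos] & 0x0F
        objects.append((tag, historical[pos + 1:pos + 1 + length]))
        pos += 1 + length
    return objects


if __name__ == "__main__":
    atr = parse_atr("3B 2A 00 80 65 A2 01 02 01 31 72 D6 43")
    print(atr["convention"], atr["interface"])
    for tag, value in parse_compact_tlv(atr["historical"]):
        print("tag", tag, "len", len(value), value.hex(" ").upper())
```

For the ATR shown above, this yields the same structure as the listing: direct convention, a single interface byte TB1 = 00, and two compact-TLV objects (tag 6 with five bytes of pre-issuing data, tag 7 with the two card-capability bytes D6 43).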